I had a professor in college who said that when an AI problem is solved, it is no longer AI.

Computers do all sorts of things today that 30 years ago were the stuff of science fiction. Back then many of those things were considered to be in the realm of AI. Now they’re just tools we use without thinking about them.

I’m sitting here using gesture typing on my phone to enter these words. The computer is analyzing my motions and predicting what words I want to type based on a statistical likelihood of what comes next from the group of possible words that my gesture could be. This would have been the realm of AI once, but now it’s just the keyboard app on my phone.
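For anyone curious, here is a rough sketch of the idea, not how any real keyboard app is implemented. The bigram counts, the gesture-match scores, and the `pick_word` helper are all made up for illustration: each candidate word the gesture could be is weighted by how often it follows the previous word.

```python
# Hypothetical counts: how often `word` followed `previous` in some corpus.
BIGRAM_COUNTS = {
    ("these", "words"): 40,
    ("these", "woods"): 3,
    ("these", "wards"): 1,
}


def pick_word(previous, candidates):
    """Pick the most likely word.

    candidates maps each word the gesture could be to a made-up
    gesture-match score between 0 and 1.
    """
    def score(word):
        # Add-one smoothing: unseen pairs are unlikely, not impossible.
        count = BIGRAM_COUNTS.get((previous, word), 0) + 1
        return candidates[word] * count

    return max(candidates, key=score)


# The gesture alone slightly favors "woods", but context favors "words".
gesture_candidates = {"woods": 0.40, "words": 0.35, "wards": 0.25}
best = pick_word("these", gesture_candidates)
```

With no useful context, the gesture score alone decides; with context, the statistics can override a slightly sloppy swipe.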

LLMs without some sort of symbolic reasoning layer aren’t actually able to hold a model of their context and the relationships within it. They predict the next token, but fall apart when you change the numbers in a problem or add some negation to the prompt.

Awesome for protein research, summarization, speech recognition, speech generation, deep fakes, spam creation, RAG document summary, brainstorming, content classification, etc. I don’t even think we’ve found all the patterns they’d be great at predicting.

There are tons of great uses, but just throwing more data, memory, compute, and power at transformers is likely to hit a wall without new models. All the AGI hype is a bit overblown. That’s not from me, that’s Noam Chomsky: https://youtu.be/axuGfh4UR9Q?t=9271.

I’ve often thought LLMs could replace all of the C-suites and upper and middle management.

Funny how no companies push that as a possibility.

I almost expect that we’ll see some company reveal it has been letting an AI control the top level decision making for the business itself, including if and when to reveal the AI.

But the funny thing will be that all the executives and board members still have jobs and huge stock awards. They will all pat each other on the back for getting paid more money to do less work, by being bold and taking a risk to let the computer do half their job for them.

There’s a name for the phenomenon: the AI effect.

I make DNNs (deep neural networks), the current trend in artificial intelligence modeling, for a living.

Much of my ancillary work consists of deflating/tempering the C-suite’s hype and expectations of what “AI” solutions can solve or completely automate.

DNN algorithms can be powerful tools and muses in scientific endeavors, engineering, creativity and innovation. They aren’t full replacements for the power of the human mind.

I can safely say that many, if not most, of my peers in DNN programming and data science are humble in our approach to developing these systems for deployment.

If anything, studying this field has given me an even more profound respect for the billions of years of evolution required to display the power and subtleties of intelligence as we narrowly understand it in an anthropological, neuro-scientific, and/or historical framework(s).

Yup.

I don’t know why. The people marketing it have absolutely no understanding of what they’re selling.

Best part is that I get paid if it works as they expect it to and I get paid if I have to decommission or replace it. I’m not the one developing the AI that they’re wasting money on, they just demanded I use it.

That’s true software engineering, folks. Decoupling doesn’t just make it easier to program and reuse, it saves your job when you need to retire something later too.

Their goal isn’t to make AI.

The goal of both the VCs and the startups is to make money. That’s why.

It’s not even to make money, they already do that. They need GROWTH. More money this quarter than last or the stockholders don’t get paid.

Growth doesn’t mean revenue over cost anymore, it just means number go up. The easiest way to create growth from nothing is marketing tulips to venture capital and retail investors.

> The people marketing it have absolutely no understanding of what they’re selling.

Has it ever been any different? Like, I’m not in tech, I build signs for a living, and the people selling our signs have no idea what they’re selling.

The worrying part is the implications of what they’re claiming to sell. They’re selling an imagined future in which there exists a class of sapient beings with no legal rights that corporations can freely enslave. How far that is from the reality of the tech doesn’t matter, it’s absolutely horrifying that this is something the ruling class wants enough to invest billions of dollars just for the chance of fantasizing about it.

Sounds about right. There are some valid and good use cases for “AI”, but the majority is just buzzword marketing.

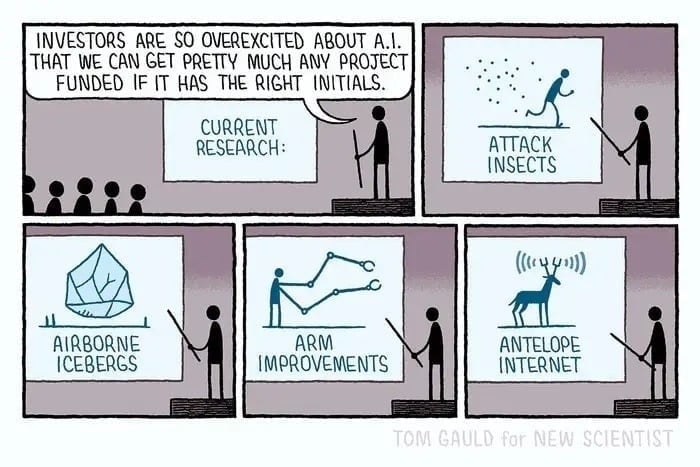

I have lots of uses for Attack Insects….

That’s about right. I’ve been using LLMs to automate a lot of cruft work from my dev job daily. It’s like having a knowledgeable intern who sometimes impresses you with their knowledge but needs a lot of guidance.

watch out; i learned the hard way in an interview that i do this so much that i can no longer create terraform & ansible playbooks from scratch.

even a basic api call from scratch was difficult to remember and i’m sure i looked like a hack to them since they treated me as such.

In addition, there have been these studies released (not so sure how well established, so take this with a grain of salt) lately, indicating a correlation with increased perceived efficiency/productivity, but also a strongly linked decrease in actual efficiency/productivity, when using LLMs for dev work.

After some initial excitement, I’ve dialed back using them to zero, and my contributions have been on the increase. I think it just feels good to spitball, which translates to a heightened sense of excitement while working. But it’s really just much faster and more convenient to do the boring stuff with snippets and templates etc., if not as exciting. We’ve been doing pair programming with humans lately, and while that’s slower and less efficient too, it seems to contribute to a rise in quality and fewer problems in code review later, while also providing the spitballing side. In a much better format, I think, too, though I guess that’s subjective.

I mean, interviews have always been hell for me (often with multiple rounds of leetcode) so there’s nothing new there for me lol

Same here, but this one was especially painful since it was the closest match with my experience I’ve ever encountered in 20ish years. Now I know that they will never give me the time of day again and, based on my experience in Silicon Valley, I may end up on their blacklist permanently.

Blacklists are heavily overrated and exaggerated, I’d say there’s no chance you’re on a blacklist. Hell, if you interview with them 3 years later, it’s entirely possible they have no clue who you are and end up hiring you - I’ve had literally that exact scenario happen. Tons of companies allow you to re-apply within 6 months of interviewing, let alone 12 months or longer.

The only way you’d end up on a blacklist is if you accidentally step on the owner’s dog during the interview or something like that.

Being on the other side of the interviewing table for the last 20ish years, and being told more times than I want to remember that we’re not going to hire people that everyone unanimously loved and we unquestionably needed, makes me think that blacklists are common.

In all of the cases I’ve experienced in the last decade or so: people who had FAANG and old silicon on their resumes but couldn’t do basic things like creating an Ansible playbook from scratch were either an automatic addition to that list or at least the butt of a joke that pervades the company’s Kool-Aid drinker culture for years afterwards; especially so in recruiting.

Yes they’ll eventually forget and I think it’s proportional to how egregious or how close to home your perceived misrepresentation is to them.

I think I’ve probably only ever been blacklisted once in my entire career, and it’s because I looked up the reviews of a company I applied to and they had some very concerning stuff so I just ghosted them completely and never answered their calls after we had already begun to play a bit of phone tag prior to that trying to arrange an interview.

In my defense, they took a good while to reply to my application and they never sent any emails just phone calls, which it’s like, come on I’m a developer you know I don’t want to sit on the phone all day like I’m a sales person or something, send an email to schedule an interview like every other company instead of just spamming phone calls lol

Agreed though, eventually they will forget, it just needs enough time, and maybe you’d not even want to work there.

What happened to Linus? He looks so old now…

He got old.

Not especially old, though; he looks like a 54yo dev. Reminds me of my uncles when they were 54yo devs.

As a 46 year old dev I’m starting to look that way too.

I guess having 3 kids will do that to you.

That, and developing software for 30+ years.

That and leading an open source project for 30 years.

THE open source project.

Whether you’re leading a project or not, time will have pretty much the same impact. He’s in his mid-50s, and he looks pretty good for that age.

I mean he’s aging quite well given his position… Many people burn out way earlier.

[citation needed]/s

An excessive amount of aging is what folks are seeing, not that he’s just old.

He’s lost a lot of weight in 4 years so that’s probably exacerbating the wtf.

He’s 54, I think he looks pretty average for that age. He looks like an old dad, because he is.

he aged

Source?

What happened to him is happening now to you.

If you find out what happened, let me know, because I think it’s happening to me too.

People age. You don’t look the same as in 2010 either, I know that without having any idea what you look like.

He’s 54 years old

Oxidative stress is a bitch

He has a real Michael McKean vibe

Wow, yeah that’s a big difference from how I remember him

Time

It’s like he aged 10 years in the past 2 years… damn

AI as we know it does have its uses, but I would definitely agree that 90% of it is just marketing hype

The image generation features are fun, even though you have to browbeat the idiot AI into following the description.

You just haven’t tried OpeningAI’s latest orione model. A company employee said it is soooo smart, can you believe it? And the government is like, goddamn we are so scareded of it. Im telling you AGI december 2024, you’ll will see!

Edit:

Is it so hard for people to see sarcasm?

Year of the Linux Deskto…oh wait wrong thread, same same though. If we just wait one more year, we’ll have FULL FSD!

Next year, I promise, is the year we all switch to crypto, just wait!

In just two years, no one will be driving 4,000lb cars anymore, everyone just needs a Segway.

We’re going to have “just walk out” grocery stores in two years, where you pick items off the shelf, and ~~10,000 outsourced Indians will review your purchase and complete your CC transaction in about a half hour~~ our awesome technology will handle everything, charging you for your groceries as you leave the store, in just two more years!

I really thought by making intentional mistakes in my comment people would be able to see the OBVIOUS sarcasm, but I guess not…

I think when the hype dies down in a few years, we’ll settle into a couple of useful applications for ML/AI, and a lot will be just thrown out.

I have no idea what will be kept and what will be tossed but I’m betting there will be more tossed than kept.

AI is very useful in the medical sector, if coupled with human intervention. The tedious work radiologists do to rule out normal imaging and its variants (which account for over 80% of cases) can be automated with AI. Many of the common presenting symptoms can be guided toward a diagnosis with some meticulous use of AI tools. Some BCIs, such as bioprostheses, can also benefit immensely from AI.

The key is that its work must be monitored by clinicians. As valuable as patients’ private information is, blindly feeding everything to an AI can have disastrous consequences.

I recently saw a video of AI designing an engine, and then simulating all the toolpaths to be able to export the G code for a CNC machine. I don’t know how much of what I saw is smoke and mirrors, but even if that is a stretch goal it is quite significant.

An entire engine? That sounds like a marketing ploy. But take smaller chunks, say the shape of a combustion chamber or the shape of an intake or exhaust manifold. It’s going to take white noise and just start pattern matching and, monkeys-on-typewriters style, churn out horrible pieces through a simulator until it finds something that tests out as a viable component. It has a pretty good chance of turning out individual pieces that are either cheaper or more efficient than what we’ve dreamed up.
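A toy sketch of that generate-and-test loop, just to make the idea concrete. Everything here is an assumption for illustration: `simulate` is a made-up stand-in for a real physics simulator, the two parameters and their ranges are invented, and plain random search is much cruder than whatever a real generative design tool does.

```python
import random


def simulate(diameter_mm, length_mm):
    """Made-up stand-in for a real simulator: lower cost is better."""
    return (diameter_mm - 42.0) ** 2 + (length_mm - 300.0) ** 2


def random_search(trials, seed=0):
    """Throw random designs at the simulator and keep the best one."""
    rng = random.Random(seed)
    best_design, best_cost = None, float("inf")
    for _ in range(trials):
        # Each candidate is just random numbers within hypothetical limits.
        design = (rng.uniform(20.0, 80.0), rng.uniform(100.0, 500.0))
        cost = simulate(*design)
        if cost < best_cost:
            best_design, best_cost = design, cost
    return best_design, best_cost


few_design, few_cost = random_search(trials=10)
many_design, many_cost = random_search(trials=10_000)
```

Most candidates are junk, but with enough trials the best one found can only get better, which is why this works far more plausibly for a single well-simulated part than for a whole engine.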

AI is like the calculator for the mathematician. A very useful tool that allows you to be more efficient but is completely useless without someone capable of handling it.

> and then simulating all the toolpaths to be able to export the G code for a CNC machine. I don’t know how much of what I saw is smoke and mirrors, but even if that is a stretch goal it is quite significant.

<sarcasm> Damn, I ascended to become an AI and I didn’t realise it. </sarcasm>

Maybe in some places, but I just found this:

A marketplace where people can generate their jewellery ideas and then order them. It makes life way easier for goldsmiths and customers. I do not think AI will leave this project, for example.

Snort might actually be a good real world application that stands to benefit from ML, so for security there’s some sort of hopefulness.

I am thinking of deploying a RAG system to ingest all of Linus’s emails, commit messages and pull request comments, and we will have a Linus chatbot.

Hold on there Satan… let’s be reasonable here.

The only times I’ve seen AI work well are for things like game development, mainly the upscaling of textures and filling in missing frames of older games so they can run at higher frame rates without being choppy. It may even have applications for getting more voice acting done… if SAG and Silicon Valley can find an arrangement for that which works out well for both parties…

If not for that I’d say 10% reality was being… incredibly favorable to the tech bros

^^

^^^

he isn’t wrong

If anything he’s being a bit generous.

I play around with the paid version of chatgpt and I still don’t have any practical use for it. it’s just a toy at this point.

I used ChatGPT to help look up some syntax for a niche scripting language over the weekend, to cut down the time I spent working so I could get back to the weekend.

Then, yesterday, I spent time talking to a colleague who was familiar with the language to find the real syntax because chatGPT just made shit up and doesn’t seem to have been accurate about any of the details I asked about.

Though it did help me realize that this whole time when I thought I was frying things, I was often actually steaming them, so I guess it balances out a bit?

I use shell_gpt with an OpenAI API key so that I don’t have to pay a monthly fee for their web interface, which is way too expensive. I topped up my account with $5 back in March and I still haven’t used it up. It is OK for getting info on very well established topics where doing a web search would be more exhausting than asking ChatGPT. But every time I try something more esoteric it will make up shit, like non-existent options for CLI tools.

ugh hallucinating commands is such a pain

It’s useful for my firmware development, but it’s a tool like any other. Pros and cons.

No, AI is a very real thing… just not LLMs, those are pure marketing

The latest LLMs get a perfect score on the South Korean SAT and can pass the bar. More than pure marketing, if you ask me. That does not mean that 90% of businesses claiming AI are anything more than marketing, or that many aren’t pretty much just a front end for GPT APIs. LLMs like Claude even check their work for hallucinations. Even if we limited all AI to LLMs, they would still be groundbreaking.

The Korean SAT is highly standardized in multiple-choice form, and there is an immense library of past exams that both test takers and examiners use. I would be more impressed if the LLMs could also show a step-by-step working of the problem…

Claude 3.5 and o1 might be able to do that; if not, they are close to being able to do that. Still better than 99.99% of earthly humans

You seem to be in the camp of believing the hype. See this write-up of an Apple paper detailing how adding simple statements that should not impact the answer to the question severely disrupts many of the top models’ abilities.

In Bloom’s taxonomy of the 6 stages of higher level thinking I would say they enter the second stage of ‘understanding’ only in a small number of contexts, but we give them so much credit because as a society our supposed intelligence tests for people have always been more like memory tests.

Exactly… People are conflating the ability to parrot an answer based on machine-levels of recall (which is frankly impressive) with the machine actually understanding something and being able to articulate how the machine itself arrived at a conclusion (which, in programming circles, would be similar to a form of “introspection”). LLMs are not there yet

Like with any new technology. Remember the blockchain hype a few years back? Give it a few years and we will have a handful of areas where it makes sense and the rest of the hype will die off.

Everyone sane probably realizes this. No one knows for sure exactly where it will succeed so a lot of money and time is being spent on a 10% chance for a huge payout in case they guessed right.

There’s an area where blockchain makes sense!?!

Git is a sort of proto-blockchain – well, it’s a ledger anyway. It is fairly useful. (Fucking opaque compared to subversion or other centralized systems that didn’t have the ledger, but I digress…)

Cryptocurrencies can be useful as currencies. Not very useful as investment though.

It has some application in technical writing, data transformation and querying/summarization but it is definitely being oversold.

Yep, Ik ai should die someday.

Copilot by Microsoft is completely and utterly shit but they’re already putting it into new PCs. Why?

Investors are saying they’ll back out if there’s no AI in the products. So tech leaders will talk the talk and all deal with AI.

Copilot+ PCs tho…